The Case for AI Guardrails

Issue 119, July 27, 2023

Whatever you think about the U.S. government or our elected officials, the government does have guardrails in place to protect its citizens. For pharma and food products, it’s the FDA. For workplace safety, there’s OSHA. For mobility safety, it’s the Department of Transportation. For safe investments, there’s the SEC. For consumer protection, there’s the Federal Trade Commission. For AI and emerging tech, there’s nothing.

Setting Boundaries

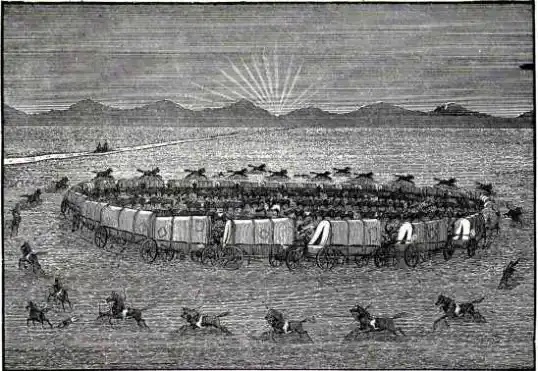

By human nature, we set boundaries made of guardrails to protect ourselves. Sometimes those boundaries encircle us while others have small openings that we hope we can slip through. The westward-ho pioneer settlers’ evening ritual of circling the wagons was real and sensible when facing the unknown and perceived danger. Since we are a highly social species, protection is a survival tool for our families, ourselves and our communities. Recently, however, there is fragmented consensus on what needs to be protected and by whom. To put it into a pop culture context, Greta Gerwig’s runaway success film Barbie hit some visceral touchpoints of what connects us and drives us apart in our asymmetrical views of what’s worth protecting. But we digress.

Let’s get back to the headline maker that is going to change all our lives: emerging tech and AI. We’ve read the news. We’ve had the conversation with colleagues and friends about how AI may change our lives and our work. Even our children’s teachers are already using AI to help them with lesson plans. And every time you turn around there is another app or service that is promoting its shiny new integration with AI. It seems to have taken hold in most aspects of our lives, even when we don’t know it’s there. Now the government, no doubt spurred by public outcry and elected officials’ indignation (yet ignorance about tech), is kicking in and attempting to create guardrails.

AI Fenced In

The Biden administration reached a deal with seven big tech companies (Amazon, Anthropic, Google, Inflection, Meta, Microsoft and OpenAI) to put more safeguards around artificial intelligence. The effort aims to curb misinformation and other risks that stem from the technology, as Laurie Sullivan reports in Mediapost. But here’s the thing: Who exactly knows how to do this?

As we all have learned on our own or from others, the AI that is available to us so far seems to hallucinate from time to time, if not become completely delusional. How do you create a policy or regulation that identifies, manages or stops AI from hallucinating when asked a question? And if its response is wrapped in delusion, what do you do with it? Report AI to the police? The FBI? Close your laptop and office door and hope it just goes away?

We suggest that all the AI horses have already left the barn, leaving us high and dry in anticipating what comes next. It is imperative that we find a well-informed approach, one that recognizes the reality and promise of AI as well as its pitfalls. Most importantly, it isn’t just AI that needs guardrails. Those of us who aspire to integrate AI into all aspects of our lives also need the guardrails.

So, back to the presidential initiative: “The White House said that the commitment is part of a broader effort to ensure AI is developed safely and responsibly, and to protect Americans from harm and discrimination. A Blueprint for an AI Bill of Rights was developed to safeguard Americans’ rights and safety, and U.S. government agencies have increased efforts to protect Americans from the risks.” (Mediapost)

The Commitments

So, will this new public/private partnership really ensure that when we chat with a physician, we will be interacting with a human professional? That when we read news reports, they will be written with human-infused analysis? That our online ads are read by a potential customer, not a potential bot? Or that when we call the local police helpdesk, we will be met by a responsive, empathetic human being who is trying to help us?

The tech consortium’s stated commitments are:

- Internal and external testing of their AI systems when it comes to areas of misuse, societal risks, and national security concerns before release.

- Committing to sharing information across the industry and with governments, the public and academics on managing risks.

- Developing robust mechanisms to ensure that users know when content is AI generated, such as a watermark.

- Investing in cybersecurity and insider-threat safeguards to protect proprietary and unreleased model weights (a technical term for the mathematical instructions that give AI models the ability to function).

- Facilitating third-party discovery and reporting of vulnerabilities in their AI systems.

- Publicly reporting their AI systems’ capabilities, limitations, and areas of appropriate and inappropriate use.

- Prioritizing research on the societal risks that AI systems can pose, including avoiding harmful bias and discrimination and protecting privacy.

- Developing and deploying advanced AI systems to help address society’s greatest challenges.

That’s a lot of commitment from seven leading tech companies to protect the world. When we reflect on how these commitments will be communicated to the average person, there are some immediate barriers. First, most individuals don’t like to read legal agreements or documents. With a national attention span disorder, it seems that anything more than a few paragraphs results in disengagement, not to mention a lack of comprehension. Consider how many times you have simply hit agree or submit without reading the 30-page consensual legal relationship you just entered. Once? Several? All the time?

From the mundane to the significant, most of society is not well enough informed, either through laziness or being over-trusting, to know the difference between human and AI-generated content. Except maybe Sam Altman and Satya Nadella. Sound harsh? Maybe. But at 2040 we are critical thinkers, and we urge everyone in our ecosystem to value that one skill, even though it often makes you unpopular with people who prefer the status quo. Those few pivotal moments of pausing to think critically always pay dividends today, tomorrow and in the future.

Looking Inward

There’s something more profound that we need to consider about why there is a need for AI guardrails. Not to sound pessimistic, but we believe that people (and their governments) often create guardrails to protect humans from themselves. What do we mean? It is naïve to assume, without critical challenge, that all human beings are working for the greater good. The common sense in us realizes that, whether we like it or not, regulations, policies and guardrails ultimately protect us from ourselves: from the inherent behaviors that we often do not consciously recognize and, when we do recognize them, may dismiss with a simple wave of the hand.

There’s a reason the government has finally entered the tech discourse to demand that social media companies limit and control misinformation. The cynics say that social fanatics do not have the objective assessment skills to investigate and determine whether what they are seeing, reading, or hearing is real, a version of the truth or an outright fake. So, we want to protect them from themselves, right? That discourse, peppered with brash statements, bullying and shaming, and questionable articles, has yet to result in any formal policy of protection. Words matter, but words matter more when they protect or guide us.

Privacy Protocols

As we have reported in past issues of this newsletter, the US government has yet to create and implement a federal-level privacy policy. Last summer, we watched a bill gain bipartisan support in the House, but then it fell by the wayside, eclipsed by other battles and priorities. This week the Senate takes up a major defense funding bill that includes amendments to ban TikTok and any Chinese-owned company that offers social media in the US. But it falls short of a country-wide privacy policy. Given the vacuum created by the federal government, US states continue to implement their own privacy policies to protect the citizens within their borders. The EU, of course, is way ahead of its US counterparts in terms of privacy protocols and is forward-thinking, with its own set of AI guardrails soon to become law despite the pushback from big tech.

You have to wonder if the US Government is reacting to AI simply in response to the hype. Or are we playing follow the leader as the EU and other countries move forward more proactively?

Technological Determinism

Human beings created AI and all its iterations. In many ways, the back story of the movie The Matrix seems to be playing out in real-time across society and in our government. Consider when Morpheus showed Neo what happened to the world above: “We created AI, society reveled in its accomplishment….” Let’s hope we don’t become electricity-producing batteries to power the AI as humans did in the Matrix.

Our constant 2040 refrain in our newsletters and our book, The Truth About Transformation, is that AI is programmed by human beings and inevitably biased by its creators. It is slowly being revealed that OpenAI, like Meta, Alphabet and similar companies, employs hundreds if not thousands of people whose main job is to correct the AI, review its responses and provide the correct information. In short, companies themselves are finding it necessary to establish guardrails for their own inventions.

Plus, there are other issues. ChatGPT is time stamped: its information dates back only to 2021. Sure, that was okay in early 2022, but as we approach the fourth quarter of 2023, how much has the world changed? How much additional knowledge have we accumulated in two years? In ten minutes? If nothing else, our lives are on an accelerated schedule with the velocity of information accumulating at the rate of 36 zettabytes per year. Think about that for a moment.

So, we need a structure that guides our technological prowess to ensure we aren’t getting ahead of ourselves and causing unintended consequences for society and ourselves. But the bigger threat is whether we are determining tech solutions or whether tech is determining our behavior, subtly but systematically. That’s why we humans need guardrails, not just the tech that we create.

Those behind the new AI commitments, and those seeking to create AI guardrails, must be informed and knowledgeable about the policies they are considering in order to manage AI and move beyond the headline hype. They face the inconvenient truth that the AI we have created has faults as well as advantages. We can determine its future, or it can determine our values and behavior … and on that it is practically impossible to impose any guardrails.

Get “The Truth about Transformation”

The 2040 construct for change and transformation. What’s the biggest reason organizations fail? They don’t honor, respect, and acknowledge the human factor. We have compiled a playbook for organizations of all sizes to consider all the elements that comprise change, and we have included some provocative case studies that illustrate how transformation can quickly derail.

Kevin Novak

Kevin Novak is the Founder & CEO of 2040 Digital, a professor of digital strategy and organizational transformation, and author of The Truth About Transformation. He is the creator of the Human Factor Method™, a framework that integrates psychology, identity, and behavior into how organizations navigate change. Kevin publishes the long-running Ideas & Innovations newsletter, hosts the Human Factor Podcast, and advises executives, associations, and global organizations on strategy, transformation, and the human dynamics that determine success or failure.